Data Engineer Architect

Who is a Data Engineer Architect?

A data engineer architect is a professional who combines the responsibilities and skills of a data engineer and a data architect. In today's job market, these are often two separate roles.

A data architect conceptualizes the data frameworks that guide data modeling, data warehousing, database management, and extract, transform, load (ETL) practices. By a common definition, a data architect designs how data is stored, consumed, and integrated by the IT systems and applications that use it.

By comparison, a data engineer builds the pipelines that ingest, move, store, and prepare data according to that framework and architecture. Data engineers generally have backend software engineering skills and are well versed in data pipeline management practices.
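To make the ingest, prepare, and store stages concrete, here is a minimal sketch in plain Python. It assumes a hypothetical CSV export of meter readings as the source and uses an in-memory SQLite table as the destination. The field names, the cleaning rule (drop rows with a missing reading), and the table layout are illustrative assumptions, not a prescribed design.

```python
import csv
import io
import sqlite3

# Hypothetical raw input: a CSV export from a source system.
RAW_CSV = """meter_id,timestamp,kwh
M1,2024-01-01T00:00,1.5
M2,2024-01-01T00:00,
M1,2024-01-01T01:00,2.0
"""


def ingest(text):
    """Ingest: parse raw CSV text into a list of dicts, one per row."""
    return list(csv.DictReader(io.StringIO(text)))


def prepare(rows):
    """Prepare: drop rows with a missing reading and cast kwh to float."""
    cleaned = []
    for row in rows:
        if not row["kwh"]:
            continue  # assumption: incomplete records are skipped, not imputed
        cleaned.append((row["meter_id"], row["timestamp"], float(row["kwh"])))
    return cleaned


def store(records, conn):
    """Store: load prepared records into a destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS readings (meter_id TEXT, ts TEXT, kwh REAL)"
    )
    conn.executemany("INSERT INTO readings VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(text, conn):
    """Run ingest -> prepare -> store, then return (row count, total kWh)."""
    store(prepare(ingest(text)), conn)
    return conn.execute("SELECT COUNT(*), SUM(kwh) FROM readings").fetchone()


if __name__ == "__main__":
    print(run_pipeline(RAW_CSV, sqlite3.connect(":memory:")))
```

Keeping each stage in its own function makes it easy to test a stage in isolation or to swap the destination (for example, a warehouse instead of SQLite) without touching the parsing and cleaning logic.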

Why is the Data Engineer Architect Role Important?

With the advent of big data, data pipelines have grown dramatically in complexity. Because enterprise needs keep evolving, pipelines and data systems must be agile enough to adapt to changing requirements. The data engineer architect works at the center of this complexity: conceptualizing and building these large-scale pipelines, then maintaining them over time so that they use compute resources efficiently and serve every consuming application reliably.

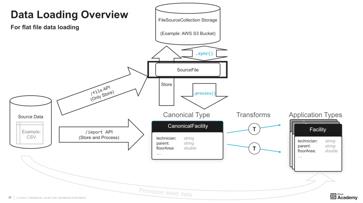

How C3 AI Helps Data Engineer Architects

Data engineer architects can use the C3 AI® Type System to handle much of the heavy lifting in data unification and wrangling through its canonical schema and application types. With more than 200 pre-built data connectors and domain-specific data models, the C3 AI Platform helps define, monitor, and manage data loading, lineage, relationships, and integration for complex source systems. Below is an example of the data loading process facilitated on the C3 AI Platform. The pre-built canonicals, data ingestion pipelines, and domain-specific data models let data engineer architects shield the application layer from the changing structure and complexity of the underlying source data, so they can focus on delivering data consistently, reliably, and on time.
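The idea of a canonical schema that hides source-system differences from applications can be illustrated without any particular platform. The sketch below is plain Python and is not the C3 AI Type System or its API. The two source formats (`source_a`, `source_b`), their field names, and the `CanonicalReading` type are hypothetical. Each source gets its own transform into one shared record type, so application code such as `total_kwh` never needs to know whether a reading arrived as epoch seconds in kWh or as an ISO-8601 string in Wh.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CanonicalReading:
    """Hypothetical canonical schema shared by all source systems."""
    asset_id: str
    timestamp: datetime  # always timezone-aware UTC
    value_kwh: float


def from_source_a(rec):
    # Hypothetical source A: epoch seconds, energy already in kWh (as a string).
    return CanonicalReading(
        asset_id=rec["id"],
        timestamp=datetime.fromtimestamp(rec["ts"], tz=timezone.utc),
        value_kwh=float(rec["kwh"]),
    )


def from_source_b(rec):
    # Hypothetical source B: ISO-8601 local time with offset, energy in Wh,
    # and inconsistently formatted asset references.
    return CanonicalReading(
        asset_id=rec["AssetRef"].strip().upper(),
        timestamp=datetime.fromisoformat(rec["ReadAt"]).astimezone(timezone.utc),
        value_kwh=rec["EnergyWh"] / 1000.0,
    )


# One canonical transform per source system.
TRANSFORMS = {"source_a": from_source_a, "source_b": from_source_b}


def unify(batches):
    """Map every source's records through its transform into one sorted list."""
    out = []
    for source, records in batches.items():
        transform = TRANSFORMS[source]
        out.extend(transform(r) for r in records)
    return sorted(out, key=lambda r: (r.asset_id, r.timestamp))


def total_kwh(readings, asset_id):
    # Application logic sees only CanonicalReading, never the source formats.
    return sum(r.value_kwh for r in readings if r.asset_id == asset_id)


SAMPLE_BATCHES = {
    "source_a": [{"id": "PUMP-1", "ts": 1704067200, "kwh": "1.5"}],
    "source_b": [
        {"AssetRef": " pump-1 ", "ReadAt": "2024-01-01T02:00:00+01:00", "EnergyWh": 2500}
    ],
}

if __name__ == "__main__":
    readings = unify(SAMPLE_BATCHES)
    for r in readings:
        print(r)
    print(total_kwh(readings, "PUMP-1"))
```

Adding a new source system then means writing one more transform and registering it in `TRANSFORMS`; the canonical type and the application logic stay unchanged.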