In previous blog posts, we introduced some core concepts of enterprise AI applications, and how these applications are increasingly crucial for companies to drive their digital transformations and unlock economic value at scale.

In this blog post, we will explore the technical underpinnings of enterprise AI applications. We will dive deeper into the technical requirements of an enterprise AI application and articulate why these requirements are critical to unlocking economic value from digital transformations.

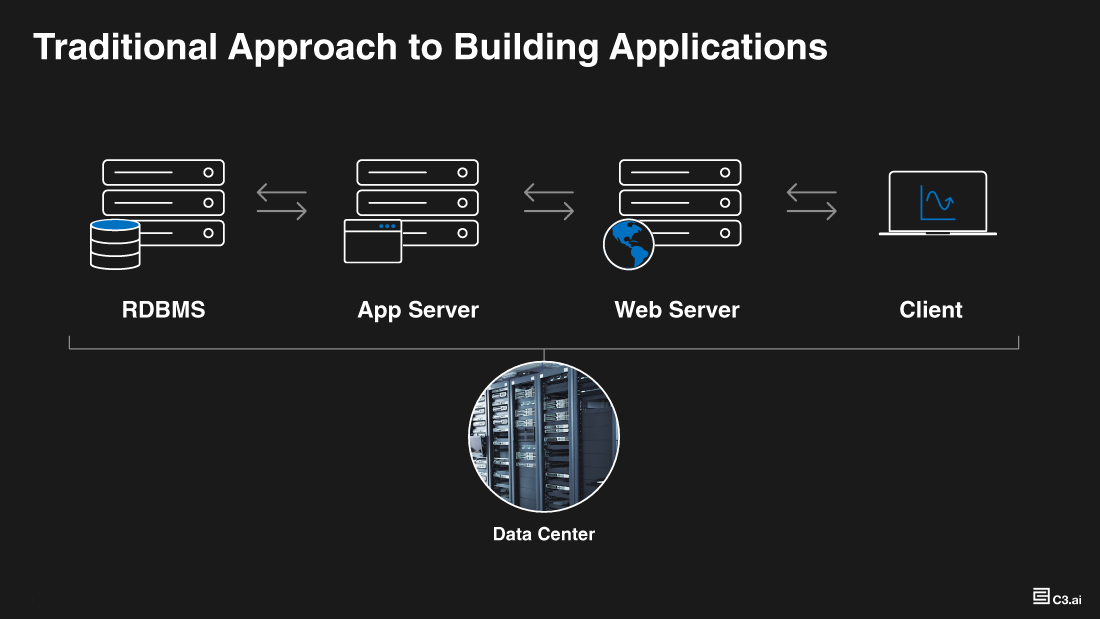

Today, the typical approach to building applications involves choosing a database (typically a relational store, although we increasingly see cases where organizations choose NoSQL stores), piping select datasets into that store, modeling those datasets, leveraging an application server to apply business logic on the data, and exposing insights through a UI (user interface) and web server. The approach is simple and powerful for what it was built for.
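To make the layers concrete, here is a minimal sketch of that traditional pattern using only Python's standard library. The `orders` table, the spend threshold rule, and the text "UI" are hypothetical stand-ins we chose for illustration, not part of any particular product.

```python
import sqlite3
from typing import List, Tuple


def build_store() -> sqlite3.Connection:
    """Data layer: create a relational store and pipe a selected dataset into it."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical schema, for illustration only.
    conn.execute("CREATE TABLE orders (customer TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("acme", 120.0), ("acme", 80.0), ("globex", 40.0)],
    )
    return conn


def customers_over_threshold(
    conn: sqlite3.Connection, threshold: float
) -> List[Tuple[str, float]]:
    """Business logic: total spend per customer, keeping totals at or above threshold."""
    rows = conn.execute(
        "SELECT customer, SUM(amount) FROM orders "
        "GROUP BY customer HAVING SUM(amount) >= ? ORDER BY customer",
        (threshold,),
    )
    return [(customer, total) for customer, total in rows]


def render_report(rows: List[Tuple[str, float]]) -> str:
    """Presentation layer: a plain-text stand-in for the UI and web server."""
    return "\n".join(f"{customer}: {total:.2f}" for customer, total in rows)


if __name__ == "__main__":
    store = build_store()
    print(render_report(customers_over_threshold(store, 100.0)))
```

Everything here runs against a single store with a fixed schema, which is exactly what makes the pattern simple and also what limits it once models and many data sources enter the picture.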

This class of enterprise software enables some custom workflows, traditional use cases, and simple automation such as RPA (robotic process automation).

Traditional Approach to Building Enterprise Applications

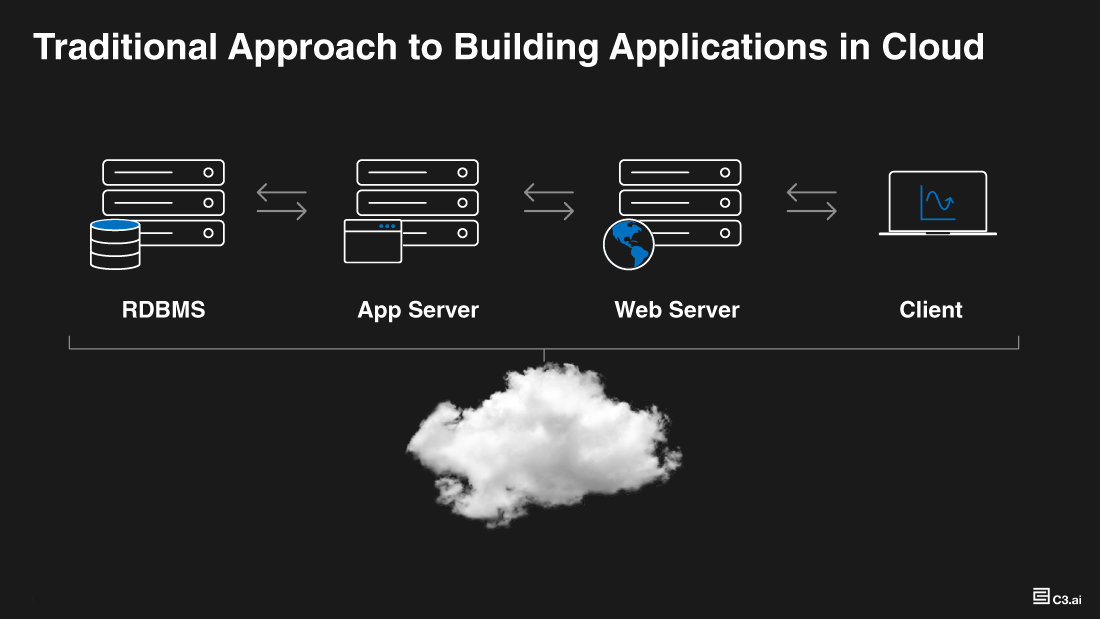

The emergence of the cloud revolutionized enterprise software. Companies benefited from virtually unlimited scalability, much improved flexibility, and greater economies of scale in running their IT operations in the cloud. Practically every organization took advantage of this cheaper, faster, and more reliable technology and moved its IT footprint to the cloud. Yet the application architecture and the development paradigm stayed practically the same, except that enterprise data and applications were now hosted in the cloud instead of in physical data centers. Enterprise applications still run on a single database (relational store or RDBMS), apply business logic on the data, and expose insights on a UI to end users.

Approach to Building Enterprise Applications in the Cloud

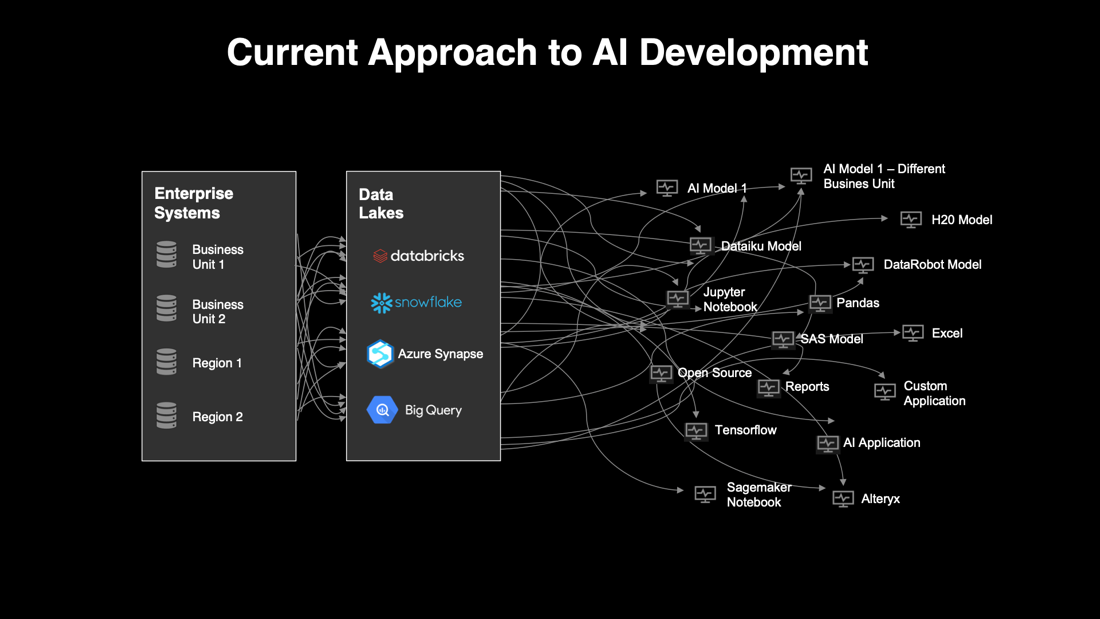

The emergence of AI/ML and other advanced analytics has been an important inflection point. Enterprises have been eager to embrace and unlock business value from advanced analytics. Companies realized that this data and analytics revolution could power the AI/ML-based digital transformations of their businesses.

To enable this transformation, organizations often started (seemingly logically) by consolidating their data in data warehouses. As data volumes grew and cloud compute became more common, these became data lakes or scalable cloud data stores.

Many companies have embarked on these data projects, often uncorrelated with the business use case and disconnected from economic value for the business. This divorce of data modeling and consolidation from the business application has resulted in significant (and well-documented) issues for many companies. Organizing an entire enterprise’s data in one fell swoop is an intractable problem. Rather, we usually recommend that companies organize, consolidate, and model their data incrementally, with the business applications or use cases in mind. This specific data challenge is, however, outside the scope of this blog post and probably warrants its own article!

Even as organizations started to consolidate their data into one physical location, another challenge remained. Data science teams started to bolt on analytic models, ML models, or other advanced models working directly against these data lakes.

Because the enterprise data lakes typically held data that was consolidated but “unmodeled,” each data science team or individual developer would interpret the data independently, clean it up as they saw fit, merge and join datasets to suit their own requirements, and generally perform a lot of custom work, typically in scattered code snippets (e.g., Python scripts, fragments of PySpark or Scala code).

Most companies hired skilled data scientists, and these teams have often been successful at prototyping models and doing effective science. But companies have typically been unable to unlock economic value at scale from these efforts, and most AI/ML digital transformations have stalled at this prototyping stage.

Two major problems remain unaddressed. First, how do organizations make it possible for data scientists and developers to build reusable data and analytic artifacts, perform standard and approved transformations, and publish approved and scalable derived data and analytic products, so that data and analytic management is standardized across the enterprise?

Second, how do end users (who are often frontline business users and subject matter experts) actually access these artifacts and embed them into their day-to-day decision making?
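The first of these problems, making transformations reusable and approved rather than rewritten in each team's scripts, can be pictured as a shared registry that pipelines must draw from. The sketch below is our own illustration under that assumption; the `approved` decorator, the `_REGISTRY` dictionary, and both transformations are hypothetical names, not an existing API.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Transform = Callable[[List[Record]], List[Record]]

# Hypothetical shared registry: transformation name -> approved implementation.
_REGISTRY: Dict[str, Transform] = {}


def approved(name: str) -> Callable[[Transform], Transform]:
    """Register a transformation under a unique name so every team reuses it."""

    def decorator(fn: Transform) -> Transform:
        if name in _REGISTRY:
            raise ValueError(f"transformation {name!r} is already registered")
        _REGISTRY[name] = fn
        return fn

    return decorator


@approved("drop_missing_customer_id")
def drop_missing_customer_id(records: List[Record]) -> List[Record]:
    """Remove records that have no customer identifier."""
    return [r for r in records if r.get("customer_id") is not None]


@approved("normalize_country_code")
def normalize_country_code(records: List[Record]) -> List[Record]:
    """Trim and upper-case the country field without mutating the input."""
    return [
        {**r, "country": str(r.get("country", "")).strip().upper()} for r in records
    ]


def run_pipeline(step_names: List[str], records: List[Record]) -> List[Record]:
    """Apply registered steps in order; an unregistered name raises KeyError."""
    for name in step_names:
        records = _REGISTRY[name](records)
    return records
```

A pipeline is then a list of step names, for example `run_pipeline(["drop_missing_customer_id", "normalize_country_code"], raw_records)`, so two teams cleaning the same dataset get the same result by construction.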

Companies have tried to address this challenge with reporting tools (e.g., Power BI, Tableau), with reports run periodically (e.g., once a week, month, or quarter). Reports work for simple use cases but start to break down where more dynamic results are required, such as interactive, automated workflows, write-backs to systems of record, and collaboration across users.

Building AI on top of existing data lakes results in a horde of disconnected, point solutions.

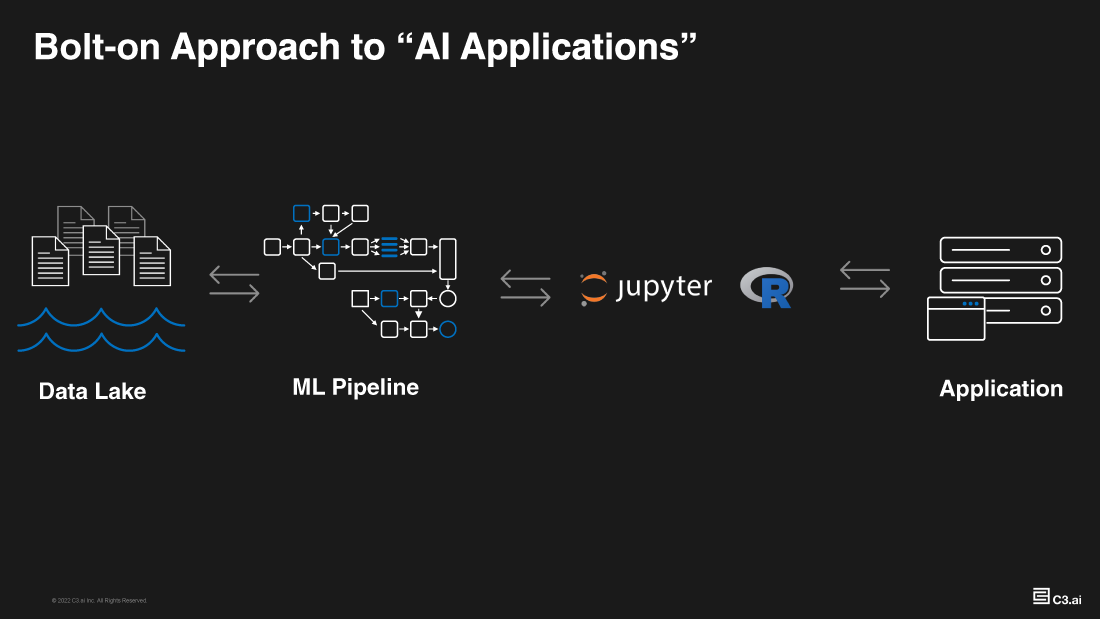

Alternatively, organizations try to pipe the results of analytics and ML pipelines into existing business applications, essentially “bolting on” model results (usually via API). While the theory behind this approach is sound (see figure below), in practice it results in a host of analytic challenges.

Bolt-on Approach to Building AI Applications

The fundamental problem with this approach is that models and analytic pipelines are divorced from the applications. Specific challenges include the fact that existing applications typically cannot handle all the data requirements for the AI/ML algorithms, so only select data and results can be surfaced in these applications.

Divorcing the algorithm pipelines from the application data also confuses the analyst consuming the results of the algorithm, as the underlying datasets typically do not match up for more complex use cases (the data “impedance mismatch” problem).

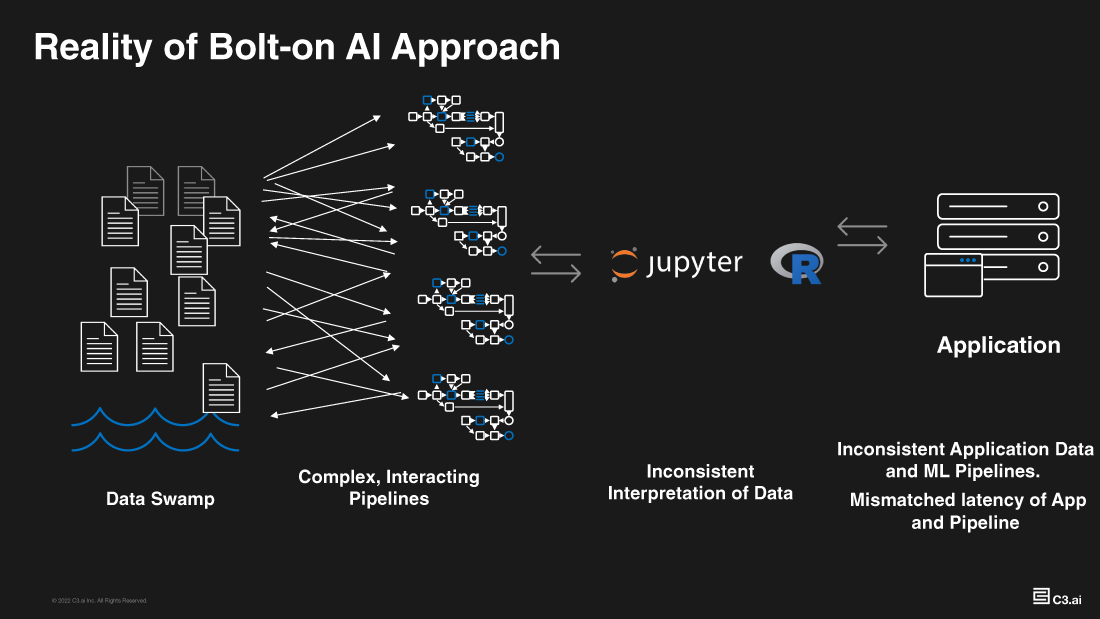

Other problems include:

- The ability to govern and operate models at scale (given that models are divorced from applications and their users), ensuring model fairness, lack of bias, and trust in algorithms.

- The ability to serve and operate models in situations where the model context is in the business application, but the entire model and data pipeline is in a different, separate environment.

- Simple data plumbing issues can cause cascading challenges and be impossible to debug (is it the model’s fault or the application’s?).

- Model and analytic updates, and synchronizing those with application updates, become exceedingly challenging.

Finally, building trust in and adoption of the models becomes very difficult, since the models are just API endpoints. Model interpretability (evidence packages), in-app model feedback, model governance, and workflows all become very challenging to implement for any use case with any degree of enterprise complexity.

Reality of the Bolt-On Approach

The bolt-on AI approach fails to prove effective and scalable. It is no wonder that CIOs, CDOs, and CEOs find themselves frustrated with the pace of change and value capture from the digital transformation of their business.

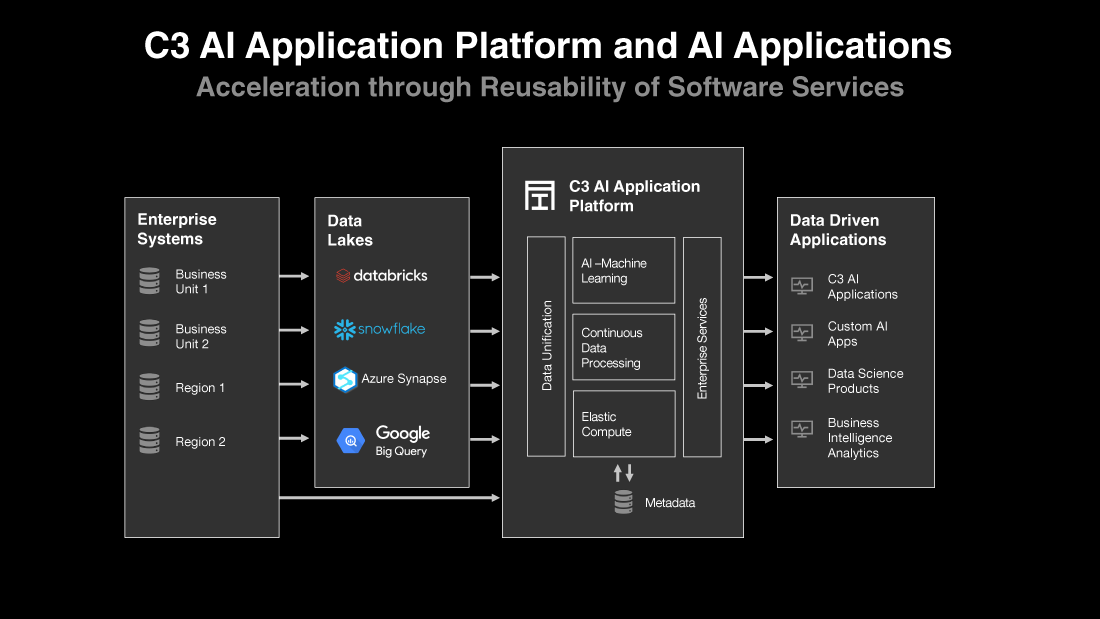

The Concept of the Enterprise AI Application

An enterprise AI application addresses the aforementioned shortcomings and eliminates the divorce between the AI/ML models and business applications. Core AI/ML models live directly within an enterprise AI application along with a common unified data image, business application logic, visualizations, workflows, and integrations into other operational systems. There is a wide range of technical requirements to develop, deploy, and operate enterprise AI applications, some of which include:

- Complex data integrations from different data types that reside in disparate data stores

- Polyglot persistence to store, manage, and extend a unified data image

- Rich model development tooling with language bindings, managed notebooks, library integrations, and multiple runtime support

- Comprehensive ML Ops services to monitor, manage, and govern ML models in production

- Application development tooling to rapidly build data models, user interfaces, workflows, and application logic

- A new tech stack that enables reusability, standardization, and scalability across data integration, model and application development, and ongoing operations

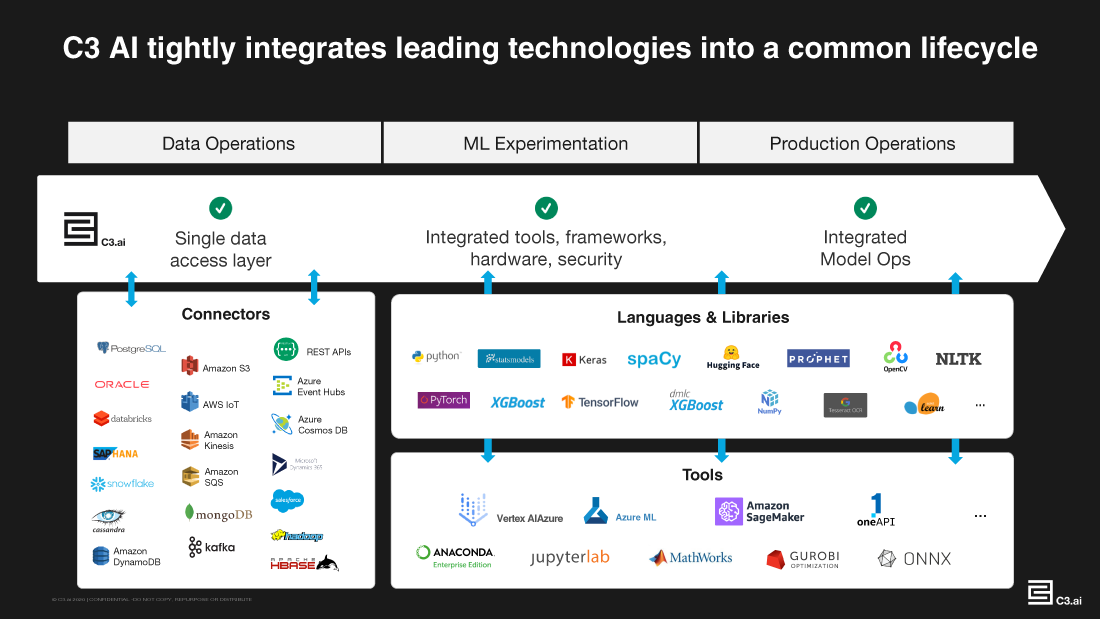

At C3 AI, we built and refined our integrated software stack – C3 AI Platform and C3 AI Applications – with these diverse requirements and challenges in mind for over a decade. The C3 AI Platform is a comprehensive development and operating platform that provides data integration, model development, model Ops, and application development capabilities for large scale enterprise AI deployments. C3 AI Applications provide extensible data models, composable ML pipelines, and prebuilt workflows and UI to accelerate AI deployment.

C3 AI Platform and AI Applications Accelerate Enterprise AI Adoption through Reusability and Scalability

In the following sections, we will dive deeper into each technical requirement and describe how C3 AI addresses them.

Complex Data Integration

As described earlier, the bolt-on AI approach leads to a divorce between application data and AI/ML models that results in a host of challenges, ranging from model interpretability, governance, and auditability to data sync, ingestion, consistency, and access issues. An enterprise AI application needs to connect to disparate data sources, unify different data types (structured and unstructured), and maintain a current image with newly available data. This unified data is then served into AI/ML models, and the accuracy of AI predictions correlates directly with the richness and diversity of the unified data.

One recent example is revenue forecasting using C3 AI CRM. Working with a Fortune 100 client, we recently demonstrated a best-in-class 3% revenue forecast error using AI (vs. 20% with traditional CRM). For this revenue forecasting algorithm, C3 AI CRM unified data from internal and external data sources. We observed that 7 of the top 9 contributing features for the AI revenue forecast came from data sources outside the existing CRM system, including external data such as news, stock indices, and market price index information.

A comprehensive enterprise AI strategy will target many different business functions such as operations, marketing & sales, customer service, supply chain, manufacturing, risk, and research & development.

The use cases will be diverse, ranging from predictive asset maintenance and supply chain risk mitigation to customer churn prevention, new product innovation, and many other high-value enterprise AI use cases. Each use case in this extensive universe will require different data science techniques and machine learning modeling approaches, which result in a host of different types of data requirements.

While some enterprise AI use cases (e.g., regression problems) will require integrating a collection of structured data types such as tables, streaming timeseries, and asset metadata, other use cases (e.g., natural language processing and computer vision) will be built and operationalized using unstructured data such as text, video, and audio. Structured and unstructured data are different in nature and are stored differently, hence data science teams will need to utilize data persisted across multiple databases. Integration frequency across data sources will also vary widely based on the availability and refresh rate of data, as well as on model inference and downstream business use case requirements. While batch integration might be sufficient for some data sources and business use cases (such as customer churn prevention), other use cases (such as payment fraud detection) might require streaming data integration in near real-time. A bolt-on AI approach cannot address this wide range of requirements and complexities, and hence cannot scale to cater to the broad range of AI use cases required across the enterprise.
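As a rough illustration of how batch and near real-time integration can feed the same current data image, the sketch below routes both paths through one upsert rule in which the most recent update per key wins. The `Record` and `UnifiedImage` classes are hypothetical and are not a description of how any specific platform implements this.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable


@dataclass(frozen=True)
class Record:
    key: str          # entity identifier, e.g. a customer or asset id
    value: float      # the attribute being tracked
    updated_at: int   # source timestamp, e.g. epoch seconds


@dataclass
class UnifiedImage:
    """Hypothetical current-state view fed by both batch and streaming sources."""

    current: Dict[str, Record] = field(default_factory=dict)

    def upsert(self, record: Record) -> None:
        """Keep the record unless a strictly newer one is already stored."""
        existing = self.current.get(record.key)
        if existing is None or record.updated_at >= existing.updated_at:
            self.current[record.key] = record

    def load_batch(self, records: Iterable[Record]) -> None:
        """Batch path: e.g. a nightly extract used for churn modeling."""
        for record in records:
            self.upsert(record)

    def on_event(self, record: Record) -> None:
        """Streaming path: e.g. a payment event that must be scored promptly."""
        self.upsert(record)
```

Because both paths share `upsert`, a late-arriving batch row cannot overwrite a fresher streamed value, which is one small example of the consistency rules that become hard to enforce when each integration is built separately.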

The C3 AI Platform was built and refined over the years with these diverse data integration requirements and challenges in mind. The C3 AI Platform can integrate all structured and unstructured data types persisted across disparate databases and enables multiple forms of integrations, including batch and near real-time stream integration, via pre-built connectors to most common databases and enterprise systems. Developers and data scientists can choose to persist the unified data directly on the C3 AI Platform or virtualize data available in existing data lake investments.

Although not required for all enterprise AI use cases, a significant portion of operational use cases rely on timeseries data coming from sensor networks, weather records, economic indicators, events, and many other sources. Ingesting and utilizing streaming timeseries data can be an overwhelming task. The C3 AI Platform provides native timeseries support that simplifies and automates the ingestion, cleansing, and normalization of input timeseries data and embeds these data into model development steps and downstream enterprise AI applications automatically.
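To give a sense of what cleansing and normalization of timeseries data involve, here is a small generic sketch: it drops invalid readings, averages values onto a regular time grid, forward-fills gaps, and rescales to the range 0 to 1. These specific choices (mean per bucket, forward fill, min-max scaling) are our assumptions for illustration and do not describe the platform's internal behavior.

```python
import math
from typing import Dict, List, Optional, Tuple

Reading = Tuple[int, Optional[float]]  # (timestamp in seconds, sensor value)


def cleanse(readings: List[Reading]) -> List[Tuple[int, float]]:
    """Drop missing or NaN values and sort the rest by timestamp."""
    valid = [(t, v) for t, v in readings if v is not None and not math.isnan(v)]
    return sorted(valid)


def resample(
    readings: List[Tuple[int, float]], start: int, end: int, step: int
) -> List[Optional[float]]:
    """Average readings into fixed buckets over [start, end); forward-fill gaps."""
    buckets: Dict[int, List[float]] = {}
    for t, v in readings:
        if start <= t < end:
            buckets.setdefault((t - start) // step, []).append(v)
    grid: List[Optional[float]] = []
    last: Optional[float] = None  # stays None until the first non-empty bucket
    for index, _ in enumerate(range(start, end, step)):
        if index in buckets:
            last = sum(buckets[index]) / len(buckets[index])
        grid.append(last)
    return grid


def min_max_normalize(values: List[Optional[float]]) -> List[Optional[float]]:
    """Scale present values to [0, 1]; a constant series maps to 0.0."""
    present = [v for v in values if v is not None]
    if not present:
        return list(values)
    low, high = min(present), max(present)
    span = high - low
    return [
        None if v is None else (0.0 if span == 0 else (v - low) / span)
        for v in values
    ]
```

Chaining the three functions on irregular sensor readings yields an evenly spaced, scaled series that a model can consume directly.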

Machine Learning Development and Deployment

Machine learning model development is the most iterative step across the enterprise AI lifecycle. Data engineers and data scientists continuously work together to define the features that best represent the problem at hand and develop the machine learning model with the highest performance metrics. Across this iterative process, data science teams run many experiments testing potential machine learning features and various modeling techniques across a wide array of libraries such as Keras, PyTorch, TensorFlow, and others.

The C3 AI Platform presents an open framework for data science teams and significantly accelerates model development and the iterative experimentation process with readily available, multi-framework, composable ML pipelines. The library of more than 30 pre-built ML pipelines spans deep learning, natural language processing, forecasting, and tree-based models, and is developed by C3 AI expert data scientists working on a wide range of enterprise AI use cases across many industries. Throughout the development lifecycle, data science teams leverage a unified data image, build reusable data and analytic artifacts, perform standard transformations, and publish approved data and analytic products, enabling rapid development and scale.

C3 AI Application Platform Presents an Open Framework for Rapid Development

What follows model development is the model deployment step, which is arguably even more complex. Large enterprise-scale deployments of machine learning models impose a host of varying requirements, ranging from different deployment schemas (such as champion-challenger deployments, randomized A/B tests, and shadow deployments) to ongoing inference and model performance monitoring that ensures continued value creation from production enterprise AI applications. The population of production machine learning models is diverse in the type of data the models use, the third-party libraries they integrate with, the hardware profiles they are optimized for (CPU vs. GPU), and the inference workloads they imply. It is practically impossible to satisfy these diverse requirements with the bolt-on AI approach and to manage a significant population of production models without a well-thought-out ML Operations practice.
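Two of the deployment schemas mentioned above can be sketched in a few lines. In the shadow pattern, a candidate model scores every request but only the incumbent's answer is served; in a randomized A/B test, requests are split between two models. The class and function names below are hypothetical, and hashing the request id is just one common way to get a stable split; terminology and mechanics vary between teams.

```python
import hashlib
from typing import Callable, List, Tuple

Model = Callable[[float], float]


def ab_bucket(request_id: str, treatment_share: float) -> str:
    """Assign a request to arm 'A' or 'B' deterministically by hashing its id."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    fraction = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    return "B" if fraction < treatment_share else "A"


class ShadowDeployment:
    """Serve the champion's prediction while logging the challenger's for comparison."""

    def __init__(self, champion: Model, challenger: Model) -> None:
        self.champion = champion
        self.challenger = challenger
        self.log: List[Tuple[float, float, float]] = []  # (input, served, shadow)

    def predict(self, x: float) -> float:
        served = self.champion(x)
        shadow = self.challenger(x)  # computed and logged, never returned to the user
        self.log.append((x, served, shadow))
        return served

    def mean_abs_disagreement(self) -> float:
        """Average absolute gap between champion and challenger outputs so far."""
        if not self.log:
            return 0.0
        return sum(abs(served - shadow) for _, served, shadow in self.log) / len(self.log)
```

The logged pairs are what a monitoring job would later compare against realized outcomes before deciding whether to promote the challenger.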

The C3 AI Platform provides a comprehensive model deployment framework that offers the flexibility and agility required to enable enterprise-scale AI deployments. The model deployment framework supports various deployment schemas, provides multiple asynchronous runtimes and hardware profiles for inference, and ensures sustained value delivery from AI applications through ongoing model performance monitoring. This ensures that AI insights are exposed to business users and embedded directly into business processes with interpretable evidence packages, ensuring fairness, lack of bias, and trust in AI models.

Orchestration Across All IT And OT Systems

Having multiple production enterprise AI systems and applications in place requires orchestration across multiple databases, various enterprise IT and OT systems, different ML libraries and runtimes, and different processing paradigms. Similar to how data can reside across multiple databases and be integrated at varying frequencies, data processing and model inference requirements can change dramatically across different enterprise AI applications. While a predictive maintenance application that monitors asset performance and health requires continuous processing of the machine learning features and predictions, batch processing might be sufficient for a demand forecasting application that predicts future customer demand for microchips on a weekly basis.

The C3 AI Platform provides a common orchestration and abstraction layer that sits atop all enterprise IT and OT systems and enables enterprise AI applications to be deployed at scale. The platform supports continuous and batch processing and scales out and in elastically based on model development and operations workloads and queues.

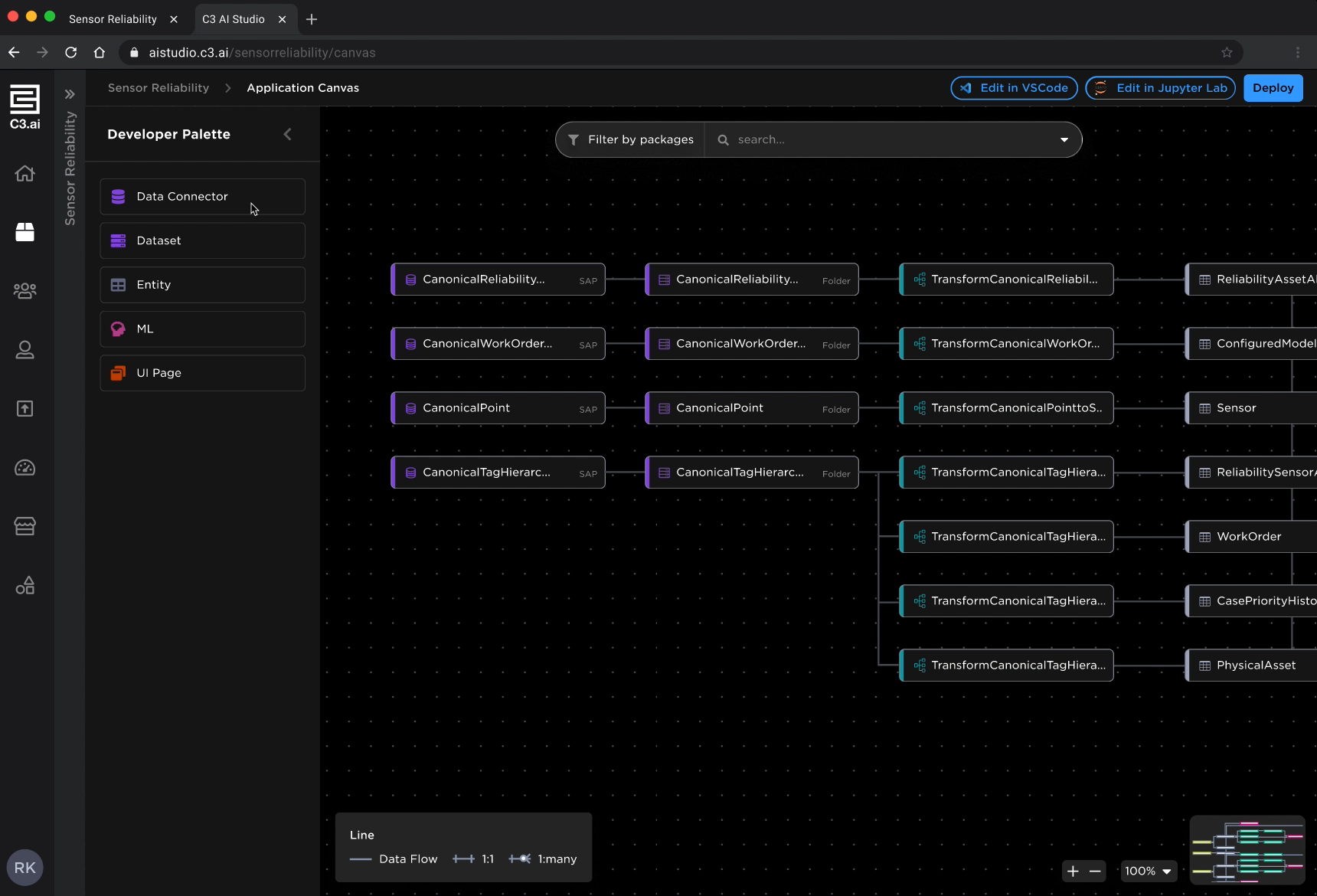

Application Development

Accelerating application development is equally important in ensuring that enterprise AI delivers concrete value for the business. In parallel with model development and data science work, development teams work on defining application logic, developing user interfaces, and building integrations to existing business applications. The C3 AI Platform provides a low-code / deep-code development environment, C3 AI Studio, for developers of all skill levels, along with tooling and configurable components to rapidly develop user interfaces, visualizations, and workflows that ultimately accelerate deployments of enterprise AI applications significantly.

C3 AI Studio Provides a Low-Code Application Canvas to Rapidly Develop AI Applications

The complexities and requirements of enterprise AI applications are not trivial and keep evolving at a rapid pace. The bolt-on AI approach cannot address these complexities and is not a viable path for enterprise-scale AI deployments, as many failed experiments have shown.

Over the past decade at C3 AI, we have significantly invested in our product portfolio to enable enterprise-scale AI deployments and have built the largest production footprint of enterprise AI globally. We continue to work with the world’s largest and most iconic organizations to deliver the transformative power of enterprise AI.

About the Author

Turker Coskun is a Director of Product Marketing at C3 AI where he leads a team of Product Marketing Managers to define, execute, and continuously improve commercial and go-to-market strategies for C3 AI Applications. Turker holds an MBA from Harvard Business School and a Bachelor of Science in Electrical Engineering from Bilkent University in Turkey. Prior to C3 AI, Turker was an Engagement Manager at McKinsey & Company.

Nikhil Krishnan, Ph.D., is CTO, Products at C3 AI where he is responsible for product innovation, strategy, and AI/machine learning. Over nearly a decade at C3 AI, Dr. Krishnan has developed deep experience in designing, developing, and implementing complex, large-scale enterprise AI and ML products and solutions to capture economic value across financial services, manufacturing, oil and gas, healthcare, utilities, and government. Dr. Krishnan earned a bachelor’s degree from the Indian Institute of Technology, Madras, and holds a master’s and Ph.D. in mechanical engineering from the University of California, Berkeley. Prior to C3 AI, Dr. Krishnan was an Associate Principal at McKinsey & Company.